Klara Inc


ARC and L2ARC sizing for Proxmox is fundamentally a capacity planning problem rather than a performance tuning exercise. The size of the ARC determines the frequency with which ZFS must fall back to accessing physical storage for read requests; L2ARC, when deployed, determines whether additional SSD bandwidth can improve cache hit ratios without consuming excessive RAM for index metadata. Misconfiguration typically manifests as unpredictable latency, guest memory pressure, and degraded performance under load. Correct configuration produces deterministic behavior and reproducible scaling characteristics across nodes in a cluster.

ARC Sizing for Proxmox: Budget-First Approach

ZFS demands memory, and so do hypervisor guests. Proxmox's documentation recommends a baseline of approximately 2 GiB plus 1 GiB per TiB of storage capacity and defaults to capping ARC at 10% of RAM (16 GiB maximum). This baseline is extremely defensive rather than optimal.
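As a rough illustration of the two Proxmox figures quoted above, the sketch below computes the documented baseline and the default cap for a few node sizes. The function names and the example pool sizes are hypothetical and chosen only for illustration; the arithmetic simply restates the rule of thumb from the text.

```python
def proxmox_baseline_gib(pool_tib: float) -> float:
    """Rule of thumb from the text: about 2 GiB plus 1 GiB per TiB of storage."""
    return 2.0 + 1.0 * pool_tib


def proxmox_default_arc_cap_gib(ram_gib: float) -> float:
    """Default cap from the text: 10% of RAM, never more than 16 GiB."""
    return min(0.10 * ram_gib, 16.0)


# Hypothetical nodes: (RAM in GiB, pool capacity in TiB).
for ram_gib, pool_tib in [(64, 8), (128, 20), (256, 40)]:
    baseline = proxmox_baseline_gib(pool_tib)
    default_cap = proxmox_default_arc_cap_gib(ram_gib)
    print(f"{ram_gib:>3} GiB RAM, {pool_tib:>2} TiB pool: "
          f"baseline {baseline:.1f} GiB, default cap {default_cap:.1f} GiB")
```

On larger pools the default cap falls well below the baseline recommendation, which is one reason to derive the cap from an explicit memory budget instead.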

A sound process when sizing the ARC for Proxmox follows this sequence:

1. Define a fixed RAM allocation for guests and the Proxmox control plane. For example, on a 128 GiB node, reserve approximately 96 GiB for virtual machines and Proxmox processes, leaving 32 GiB available for ZFS and auxiliary system services.

2. Derive an explicit ARC cap from the remaining memory budget. Within the 32 GiB available in the above example, allocate 24 GiB to ARC and reserve the remaining 8 GiB for the kernel page cache, networking, and background services. Configure this cap via the zfs_arc_max parameter in /etc/modprobe.d/zfs.conf:

options zfs zfs_arc_max=25769803776 # 24 GiB

Rebuild the initramfs and reboot per Proxmox documentation.

3. Optionally establish a minimum ARC allocation via zfs_arc_min to prevent the kernel from reclaiming ARC memory too aggressively during transient memory pressure events. A typical pattern sets the minimum at 1/3rd of the maximum value:

options zfs zfs_arc_min=8589934592 # 8 GiB

options zfs zfs_arc_max=25769803776 # 24 GiB

This approach ensures deterministic memory allocation: adding virtual machines does not inadvertently reduce ARC, and expanding storage capacity does not automatically consume guest memory.
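The budget-first sequence above can be sketched as a short calculation. This is a minimal sketch, not a Proxmox tool: the function names are hypothetical, and it assumes the budget is expressed in whole GiB and that the minimum is set to one third of the maximum, as in the example above.

```python
GIB = 1024 ** 3


def arc_budget(ram_gib: int, guest_gib: int, other_gib: int, min_divisor: int = 3):
    """Return (zfs_arc_min, zfs_arc_max) in bytes for a budget-first layout.

    ram_gib     total node RAM
    guest_gib   RAM reserved for guests and the Proxmox control plane
    other_gib   RAM kept for page cache, networking, and background services
    min_divisor the minimum is arc_max / min_divisor (3 gives one third)
    """
    arc_max_gib = ram_gib - guest_gib - other_gib
    if arc_max_gib <= 0:
        raise ValueError("no memory left for ARC; revisit the guest allocation")
    arc_max = arc_max_gib * GIB
    arc_min = arc_max // min_divisor
    return arc_min, arc_max


def modprobe_lines(arc_min: int, arc_max: int) -> list[str]:
    """Format the two options lines for /etc/modprobe.d/zfs.conf."""
    return [
        f"options zfs zfs_arc_min={arc_min}",
        f"options zfs zfs_arc_max={arc_max}",
    ]


# The 128 GiB node from the text: 96 GiB for guests, 8 GiB for other services.
arc_min, arc_max = arc_budget(ram_gib=128, guest_gib=96, other_gib=8)
print("\n".join(modprobe_lines(arc_min, arc_max)))
```

Keeping the guest reservation as an explicit input makes the trade-off visible: raising it shrinks the ARC cap deliberately rather than by accident.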

Indicative ARC Sizing for Proxmox by Node Capacity

Field observations in Proxmox and ZFS deployments suggest practical ranges for ARC allocation:

- Nodes with 64 GiB RAM: ARC cap of 12–16 GiB, preserving approximately 48 GiB for guest workloads.

- Nodes with 128 GiB RAM: ARC cap of 24–48 GiB, the exact value depending on guest density and the I/O intensity of workloads.

- Nodes with 256 GiB or greater RAM: ARC cap of 64–128 GiB, with explicit measurement to validate that both ARC and L2ARC (if deployed) are balanced appropriately.

These values are not targets in isolation but rather components of a sizing methodology anchored in the node's infrastructure role and the aggregate working sets of its hosted guests. The main factors are the amount of memory allocated to the guests, and the amount of data that is frequently used that will most benefit from being resident in the ARC.

L2ARC: Preconditions and Index Overhead

L2ARC extends the ARC layer by capturing blocks that are about to be evicted from ARC and storing them on SSD or NVMe cache vdevs. Conceptually, it allows a node to keep a cached working set larger than available RAM, so that reads which miss ARC can still avoid a full I/O to slower pool vdevs, particularly those using HDD-based RAID-Z configurations. In practice, L2ARC provides measurable benefits only when multiple preconditions are met:

- The working set is larger than ARC but exhibits stable temporal locality, meaning a bounded portion of the pool is accessed repeatedly.

- The L2ARC cache devices deliver materially higher bandwidth and lower latency than the main pool vdevs.

- Current ARC hit ratios are already high (exceeding approximately 85%), indicating efficient use of existing RAM cache capacity.

- Sufficient ARC capacity is available to maintain L2ARC index metadata without displacing actively accessed (hot) data from the primary cache.

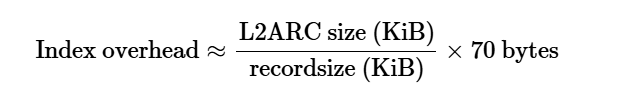

The final condition is frequently overlooked, but it is essential to proper L2ARC sizing. Every block stored in L2ARC requires an index entry (header) maintained in ARC memory. The magnitude of this index overhead is a function of the total L2ARC size and the dataset recordsize. A typical approximation for the overhead is expressed as:

index overhead ≈ (L2ARC capacity ÷ recordsize) × 70 bytes per header

Here, about 70 bytes per header is the value implied by the examples that follow.

For a 1 TiB L2ARC deployment with a 32 KiB recordsize (typical for virtual machine workloads), the resulting index consumes approximately 2240 MiB of ARC memory. The amount of space required for L2ARC indexes is highly dependent on recordsize: the smaller the record, the more headers a given L2ARC capacity needs. With the Proxmox default zvol block size of 8 KiB, 1 TiB of L2ARC will require 8960 MiB of ARC, whereas with the default filesystem recordsize of 128 KiB, it would take only about 560 MiB. On a node with a constrained ARC allocation (for example, 8 to 16 GiB), this index represents a substantial fraction of the cache. If ARC is already stressed by guest memory competition, this additional metadata pressure can reduce the proportion of hot data retained in RAM, lowering cache hit ratios and potentially degrading overall system performance.

For this reason, Klara's guidance recommends limiting L2ARC capacity such that the headers will consume no more than 1/3rd of the ARC cap. A node with a 24 GiB ARC cap, for example, should not typically deploy more than 920 GiB (8 KiB records) to roughly 15 TiB (128 KiB records) of aggregate L2ARC capacity.
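The overhead estimate and the one-third guideline can be checked with a few lines of arithmetic. This is a sketch under a stated assumption: the 70-byte header size is inferred from the 8 KiB and 32 KiB figures quoted above, not read from any particular OpenZFS release, and the function names are hypothetical.

```python
KIB = 1024
MIB = 1024 ** 2
GIB = 1024 ** 3
TIB = 1024 ** 4

# Assumption: about 70 bytes of ARC per L2ARC block, inferred from the text.
L2ARC_HEADER_BYTES = 70


def l2arc_index_bytes(l2arc_bytes: int, recordsize_bytes: int,
                      header_bytes: int = L2ARC_HEADER_BYTES) -> int:
    """ARC memory consumed by headers for a fully populated L2ARC."""
    return (l2arc_bytes // recordsize_bytes) * header_bytes


def max_l2arc_bytes(arc_cap_bytes: int, recordsize_bytes: int,
                    header_bytes: int = L2ARC_HEADER_BYTES,
                    arc_divisor: int = 3) -> int:
    """Largest L2ARC whose headers fit in arc_cap / arc_divisor."""
    header_budget = arc_cap_bytes // arc_divisor
    return (header_budget // header_bytes) * recordsize_bytes


for recordsize_kib in (8, 32, 128):
    overhead = l2arc_index_bytes(1 * TIB, recordsize_kib * KIB)
    limit = max_l2arc_bytes(24 * GIB, recordsize_kib * KIB)
    print(f"recordsize {recordsize_kib:>3} KiB: "
          f"1 TiB L2ARC needs {overhead / MIB:.0f} MiB of ARC; "
          f"24 GiB ARC cap supports up to {limit / TIB:.2f} TiB of L2ARC")
```

Because the header count scales inversely with recordsize, the same ARC cap supports about sixteen times more L2ARC at 128 KiB than at 8 KiB.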

Deployment Patterns in Proxmox Environments

Small to Medium Virtualization Nodes (32–128 GiB RAM)

On smaller Proxmox nodes, ARC is generally more cost-effective than L2ARC, and SSD-based pool topologies would not see much performance benefit from L2ARC. Typical characteristics of such deployments:

- Pool topology: multiple SSD mirror vdevs for primary VM storage, optionally augmented with a mirrored NVMe SLOG or metadata device for workloads that generate frequent synchronous write operations.

- ARC configuration: explicit cap in the 12–24 GiB range, with the majority of node memory reserved for guest virtual machines.

- L2ARC deployment: typically omitted, because the working set either fits adequately within ARC or the SSD backing the pool is already fast enough that the incremental benefit would be marginal relative to the index overhead cost.

In this class of deployment, incremental investment in additional SSD capacity for the primary pool typically yields more predictable performance gains than introducing L2ARC complexity.

Large Virtualization Nodes (256+ GiB RAM)

On larger systems with substantial memory capacity and consolidated workloads, L2ARC becomes more strategically valuable, provided that ARC is already appropriately sized and workloads demonstrate usable working sets larger than available RAM.

Typical deployment pattern:

- ARC configuration: cap of 64–96 GiB, derived from the per-node memory budget and validated through cache performance metrics.

- L2ARC deployment: 2.4–60 TiB of enterprise-grade NVMe, configured as cache vdevs and sized so that the index metadata stays within the guideline of one third of the ARC cap.

- Pool design: SSD mirrors for latency-sensitive workloads, HDD RAID-Z for capacity-oriented datasets and archival storage.

Deployments of this scale benefit from a disciplined measurement process: recording ARC hit ratios, I/O latency, and IOPS before L2ARC introduction, then re-evaluating the same metrics after a representative workload period to quantify actual performance improvements.

Capacity-Oriented Nodes (Archive and Backup)

For archival or backup-focused nodes where workloads are dominated by large sequential I/O operations, L2ARC provides limited performance benefit. Sequential access patterns are better served by the ZFS prefetcher and may bypass the cache layers entirely. Available infrastructure resources are better allocated toward vdev topology design and, where required, SLOG devices for synchronous workloads. In this role, a modest ARC configuration (8–16 GiB) without L2ARC is typically sufficient.

Measurement and Validation

Effective deployment of L2ARC requires empirical validation before commitment. The recommended process includes:

- Measure ARC hit ratios before L2ARC deployment using tools such as arcstat or arc_summary on the Proxmox host.

- If hit ratios exceed approximately 90%, L2ARC is unlikely to provide benefit; the working set already fits adequately in RAM.

- If hit ratios fall in the 70–80% range with observable cache thrashing during peak load, L2ARC may provide measurable improvement.

- After L2ARC deployment, re-measure hit ratios, I/O latency, and IOPS after 1–2 weeks of representative workload to validate actual improvement.

- If measurement shows no improvement, remove L2ARC; continuous feed operations consume SSD bandwidth and endurance for no operational benefit.
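The pre-deployment thresholds in this process can be written down as a small decision helper. This is only a sketch: the function name and return strings are hypothetical, and the "inconclusive" branch is an assumption covering hit ratios the process above does not classify (for example, 80–90%, or below 70%).

```python
def l2arc_candidate(hit_ratio_pct: float, thrashing_at_peak: bool) -> str:
    """Classify a node using the ARC hit-ratio thresholds from the text."""
    if hit_ratio_pct > 90.0:
        # Working set already fits adequately in RAM.
        return "unlikely to benefit"
    if 70.0 <= hit_ratio_pct <= 80.0 and thrashing_at_peak:
        # Matches the case where L2ARC may provide measurable improvement.
        return "worth testing"
    # Assumption: anything else needs more measurement before deciding.
    return "inconclusive"


print(l2arc_candidate(94.0, thrashing_at_peak=False))
print(l2arc_candidate(76.0, thrashing_at_peak=True))
print(l2arc_candidate(85.0, thrashing_at_peak=True))
```

Whatever the classification, the post-deployment comparison over a representative workload period remains the deciding evidence.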

Key metrics to monitor during validation:

- Hit ratio: target greater than 85% for well-tuned deployments.

- Miss ratio: each miss represents a full I/O operation with associated latency penalty.

- L2ARC read rate: sustained high read rates may indicate L2ARC thrashing or undersizing of ARC or L2ARC capacity.

- Eviction rate: high eviction rates under load suggest ARC capacity is insufficient for the working set.
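For ad hoc checks, several of these metrics can be derived directly from the kernel counters that arcstat and arc_summary read on Linux. The sketch below parses /proc/spl/kstat/zfs/arcstats; the function names are hypothetical, and it assumes the usual layout of two header lines followed by "name type data" rows. The counters are cumulative since the module was loaded, so for rates under load, sample twice and compare the difference.

```python
from pathlib import Path

MIB = 1024 ** 2
GIB = 1024 ** 3
ARCSTATS_PATH = Path("/proc/spl/kstat/zfs/arcstats")


def parse_arcstats(text: str) -> dict[str, int]:
    """Parse 'name type data' rows into a dict, skipping the header lines."""
    stats = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 3 and parts[2].isdigit():
            stats[parts[0]] = int(parts[2])
    return stats


def ratio_pct(hits: int, misses: int) -> float:
    """Hit ratio as a percentage; 0.0 when there have been no accesses."""
    total = hits + misses
    return 100.0 * hits / total if total else 0.0


def summarize(stats: dict[str, int]) -> dict[str, float]:
    """Derive the headline metrics from cumulative arcstats counters."""
    return {
        "arc_hit_pct": ratio_pct(stats.get("hits", 0), stats.get("misses", 0)),
        "l2arc_hit_pct": ratio_pct(stats.get("l2_hits", 0), stats.get("l2_misses", 0)),
        "l2arc_header_mib": stats.get("l2_hdr_size", 0) / MIB,
        "arc_size_gib": stats.get("size", 0) / GIB,
        "arc_cap_gib": stats.get("c_max", 0) / GIB,
    }


if __name__ == "__main__" and ARCSTATS_PATH.exists():
    for name, value in summarize(parse_arcstats(ARCSTATS_PATH.read_text())).items():
        print(f"{name:>18}: {value:.2f}")
```

Comparing l2arc_header_mib against the ARC cap gives a direct check on the one-third guideline for index metadata.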

L2ARC in 2026

With the price of RAM set to continue rising through 2026, adding an L2ARC to extend the cache on new or existing hypervisor deployments is becoming increasingly compelling. With proper ARC sizing for Proxmox and a proportionally sized L2ARC, some workloads can see significant performance gains. With high-performance enterprise NVMe devices costing 60–100x less per TiB than RAM, the trade-off seems simple, but many factors go into determining whether it is the right choice.

Klara’s ZFS Storage Design solution provides the expertise to ensure you design your hypervisor environment to maximize the benefits of all your hardware, from the CPU and RAM, to L2ARC and metadata vdevs, and the primary storage, be that NVMe, SSD, or HDDs.